MCP server

Model Context Protocol (MCP)

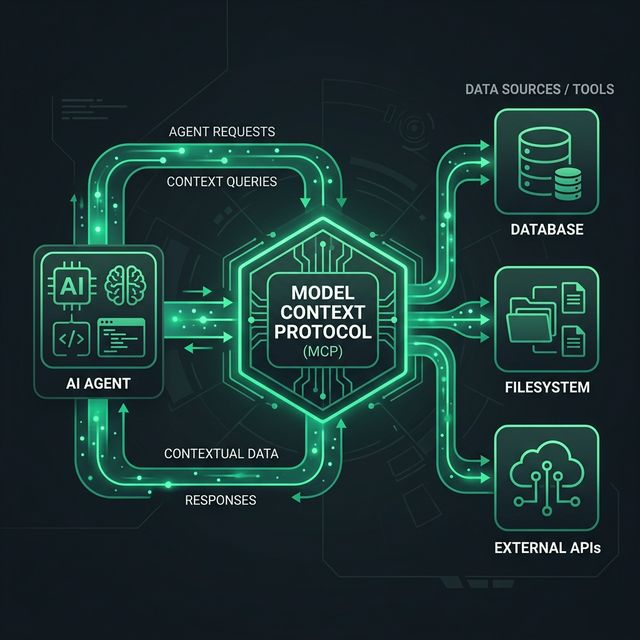

The Model Context Protocol (MCP) is an open standard that enables developers to build secure, two-way connections between their data sources and AI-powered tools. Think of it as a universal "USB-C port" for AI models.

How it works

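MCP provides a standardized way for AI applications to discover and interact with external resources. Instead of writing a custom integration for every data source, developers implement an MCP server that exposes tools (functions the model can call), resources (data the application can read into context), and prompts (reusable prompt templates). Any MCP client can list these capabilities at runtime and use them without source-specific code.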
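The Python below is a minimal sketch of discovery. It assumes the JSON-RPC 2.0 request shape MCP uses, builds the listing request for each primitive (`tools/list`, `resources/list`, `prompts/list`), and reads tool names out of a `tools/list` response. The helper names (`make_request`, `tool_names`) and the `search_docs` tool in the sample response are hypothetical.

```python
import itertools
import json
from typing import Any, Optional

# Monotonic request ids; JSON-RPC 2.0 pairs each response with its request by id.
_ids = itertools.count(1)


def make_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object for an MCP method (hypothetical helper)."""
    request: dict[str, Any] = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        request["params"] = params
    return request


# One discovery request per server primitive.
DISCOVERY_METHODS = {
    "tools": "tools/list",
    "resources": "resources/list",
    "prompts": "prompts/list",
}


def tool_names(response: dict) -> list[str]:
    """Extract tool names from a tools/list response, raising on a JSON-RPC error."""
    if "error" in response:
        raise RuntimeError(response["error"].get("message", "unknown error"))
    return [tool["name"] for tool in response["result"]["tools"]]


if __name__ == "__main__":
    for primitive, method in DISCOVERY_METHODS.items():
        print(primitive, json.dumps(make_request(method)))

    # Hypothetical response from a server that exposes a single tool.
    sample = {
        "jsonrpc": "2.0",
        "id": 1,
        "result": {"tools": [{"name": "search_docs",
                              "description": "Search local docs",
                              "inputSchema": {"type": "object"}}]},
    }
    print(tool_names(sample))
```

Each tool entry carries an `inputSchema` (JSON Schema), which is what lets a client pass valid arguments without knowing the server in advance.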

Key Components

- MCP Client: The component inside an AI application (the host, such as Antigravity or Claude Desktop) that initiates and maintains the connection to a server.

- MCP Server: A lightweight service that exposes specific capabilities (e.g., searching a database, reading a filesystem, or interacting with the GitHub API).

- Protocol: The standardized communication layer, built on JSON-RPC 2.0 messages carried over a transport such as stdio or HTTP, that manages the data exchange.
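The sketch below illustrates the protocol layer for the local (stdio) transport, where each JSON-RPC message travels as one line of JSON. It shows the `initialize` request a client sends first and the `notifications/initialized` notification that follows the server's reply. The protocol version string, the client name, and the helper functions are assumptions for illustration; check which specification revision your client and server actually support.

```python
import json
from typing import Any

# Assumption: one published protocol revision; use whatever your client and server negotiate.
PROTOCOL_VERSION = "2025-06-18"


def encode_message(message: dict) -> str:
    """Serialize one JSON-RPC message as a single newline-terminated line (stdio framing)."""
    # json.dumps without indent emits no raw newlines; newlines inside strings become "\n".
    return json.dumps(message, separators=(",", ":")) + "\n"


def decode_messages(stream: str) -> list[dict[str, Any]]:
    """Split a newline-delimited stream back into JSON-RPC message objects."""
    return [json.loads(line) for line in stream.splitlines() if line.strip()]


def initialize_request(request_id: int) -> dict:
    """First request a client sends: negotiates protocol version and capabilities."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {},
            # Hypothetical client identity, for illustration only.
            "clientInfo": {"name": "example-client", "version": "0.1.0"},
        },
    }


def initialized_notification() -> dict:
    """Sent after the initialize response; notifications carry no id and get no reply."""
    return {"jsonrpc": "2.0", "method": "notifications/initialized"}


if __name__ == "__main__":
    wire = encode_message(initialize_request(1)) + encode_message(initialized_notification())
    print(wire, end="")
```

Because a notification has no `id`, the server never answers it; requests and responses, by contrast, are matched purely by `id`.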

Visual Architecture

The following diagram illustrates the lifecycle of an MCP request:

Benefits of MCP

- Interoperability: One server can work across multiple AI clients.

- Security: Servers can run locally or within a controlled environment, so the model can reach only the data and actions a server explicitly exposes.

- Extensibility: Easily add new tools and data sources to your AI's context without re-training the model.

- Rich Context: Lets models work with live data from files, databases, and APIs at request time.
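One way a server can back up the Security point above is to confine every client-supplied path to a directory the operator chose. This is a minimal sketch of such a check, not part of the MCP protocol itself; `resolve_within_root` is a hypothetical helper and needs Python 3.9+ for `Path.is_relative_to`.

```python
from pathlib import Path


def resolve_within_root(root: str, requested: str) -> Path:
    """Resolve a client-supplied path and refuse anything outside the permitted root."""
    base = Path(root).resolve()
    # resolve() collapses ".." segments and follows symlinks before the containment check;
    # an absolute `requested` replaces `base` entirely and is then rejected if it escapes.
    target = (base / requested).resolve()
    if not target.is_relative_to(base):
        raise PermissionError(f"{requested!r} is outside the permitted root")
    return target
```

Doing the check after `resolve()` rather than on the raw string matters: a path like `docs/../../etc/passwd` or a symlink pointing outside the root would pass a naive prefix test.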

Using MCP in Build and Deploy

In the Build and Deploy ecosystem, we use MCP servers to:

- Analyze codebases directly from the filesystem.

- Query architectural patterns stored in local documentation.

- Interact with CI/CD pipelines and hosting providers like Firebase.
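For the codebase-analysis use case, a server mainly has to answer `tools/list` and `tools/call`. The sketch below is a hypothetical dispatcher with a single made-up tool, `list_source_files`; it assumes messages have already been decoded from the transport and omits the initialization handshake. As we read the MCP specification, an unknown tool is reported with JSON-RPC error `-32602`, while `-32601` is the standard JSON-RPC "Method not found" code.

```python
from pathlib import Path
from typing import Any, Callable

# Hypothetical tool registry: name -> (description, input JSON Schema, handler).
TOOLS: dict[str, tuple[str, dict, Callable[[dict], str]]] = {}


def list_source_files(args: dict) -> str:
    """Hypothetical tool: list files with a given suffix under a directory."""
    # A real server should confine `root` to an approved directory before walking it.
    root = Path(args.get("root", "."))
    suffix = args.get("suffix", ".py")
    return "\n".join(sorted(p.relative_to(root).as_posix() for p in root.rglob(f"*{suffix}")))


TOOLS["list_source_files"] = (
    "List source files under a directory",
    {"type": "object",
     "properties": {"root": {"type": "string"}, "suffix": {"type": "string"}}},
    list_source_files,
)


def handle(request: dict) -> dict:
    """Answer tools/list and tools/call; any other method gets 'Method not found'."""
    reply: dict[str, Any] = {"jsonrpc": "2.0", "id": request.get("id")}
    method = request.get("method")
    if method == "tools/list":
        reply["result"] = {"tools": [
            {"name": name, "description": desc, "inputSchema": schema}
            for name, (desc, schema, _) in TOOLS.items()
        ]}
    elif method == "tools/call":
        params = request.get("params", {})
        entry = TOOLS.get(params.get("name"))
        if entry is None:
            reply["error"] = {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}
        else:
            text = entry[2](params.get("arguments", {}))
            # Tool output is returned as a list of typed content blocks.
            reply["result"] = {"content": [{"type": "text", "text": text}], "isError": False}
    else:
        reply["error"] = {"code": -32601, "message": f"Method not found: {method}"}
    return reply
```

Keeping tools in a registry means adding a capability is one new entry; `tools/list` picks it up automatically, which is the extensibility the protocol is designed around.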

For technical implementation details, visit the Model Context Protocol GitHub.